RFQ

Part-number-led inquiry path

MCX755106AS-HEAT can be used as the direct starting point for quotation, BOM sharing and availability discussion.

Dual-port 400Gb/s InfiniBand & RoCE smart adapter with PCIe Gen5 x16, GPUDirect RDMA, and hardware security offloads for AI, HPC, and cloud data centers.

RFQ

Inquiry ready

Clear path for part number, quantity and BOM-led contact.

EN / AR

Bilingual buyer flow

Useful for procurement teams coordinating technical review across regions.

Datasheet available

The product datasheet can be downloaded directly from this page.

Selected specs

Ordering code

MCX755106AS-HEAT (900-9X7AH-0078-DTZ)

Form factor

PCIe HHHL (half height, half length), FHHL bracket optional

Host interface

PCIe Gen5 x16 (up to 32 lanes, supporting bifurcation & Multi-Host)

Protocols

InfiniBand (NDR/HDR/EDR) & Ethernet (400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE)

Network ports

Dual-port QSFP-DD (2x 400Gb/s NDR, 800Gb/s aggregate)

InfiniBand data rates

NDR 400Gb/s per port, HDR 200Gb/s, EDR 100Gb/s, FDR (backward compatible)

On-board memory

Integrated in-network memory for rendezvous offload & burst buffering
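The port and host-interface figures above can be cross-checked with simple arithmetic. The sketch below is a back-of-envelope estimate, not a vendor figure. It assumes PCIe Gen5 signaling at 32 GT/s per lane with 128b/130b line encoding and ignores TLP/DLLP protocol overhead, so real usable throughput will be lower. The port count and per-port rate are taken from the spec list as stated. The helper names `pcie_gen5_gbps` and `port_aggregate_gbps` are hypothetical and exist only for this illustration.

```python
# Back-of-envelope comparison of network port bandwidth against PCIe Gen5 host bandwidth.
# Assumptions (not vendor data): 32 GT/s per lane, 128b/130b line encoding,
# TLP/DLLP protocol overhead ignored, so real usable throughput is lower.

PCIE_GEN5_GT_PER_LANE = 32.0      # gigatransfers per second per lane
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b encoding (PCIe Gen3 through Gen5)


def pcie_gen5_gbps(lanes: int) -> float:
    """Approximate one-direction PCIe Gen5 bandwidth in Gb/s after line encoding."""
    return PCIE_GEN5_GT_PER_LANE * lanes * ENCODING_EFFICIENCY


def port_aggregate_gbps(ports: int, gbps_per_port: float) -> float:
    """Aggregate one-direction network bandwidth across all ports, in Gb/s."""
    return ports * gbps_per_port


if __name__ == "__main__":
    # Port figures as listed on this page: 2 ports at 400Gb/s each.
    network = port_aggregate_gbps(ports=2, gbps_per_port=400.0)
    for lanes in (16, 32):
        host = pcie_gen5_gbps(lanes)
        verdict = "covers" if host >= network else "falls short of"
        print(f"PCIe Gen5 x{lanes}: {host:.1f} Gb/s {verdict} {network:.0f} Gb/s of port bandwidth")
```

Under these assumptions, a single Gen5 x16 link comes to roughly 504 Gb/s per direction. That is below the 800Gb/s aggregate listed for both ports. The listed option of up to 32 lanes, about 1008 Gb/s, is therefore the configuration to confirm if both ports are expected to run at full rate at the same time.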

Deployment fit

AI & Machine Learning Clusters: large-scale training with NCCL, UCX, and GPUDirect RDMA.

Follow-up path

Quote / Compatibility / Availability

Prepared for part-number-led and BOM-led requests

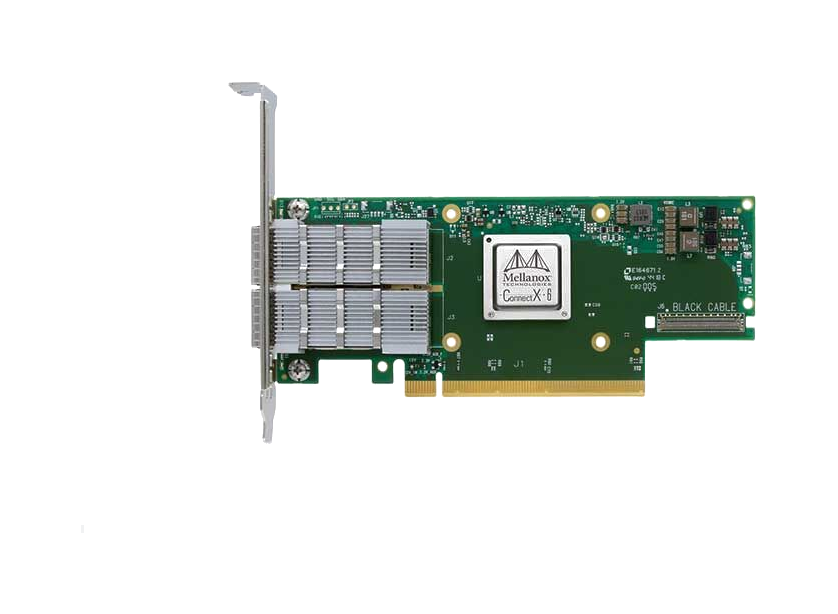

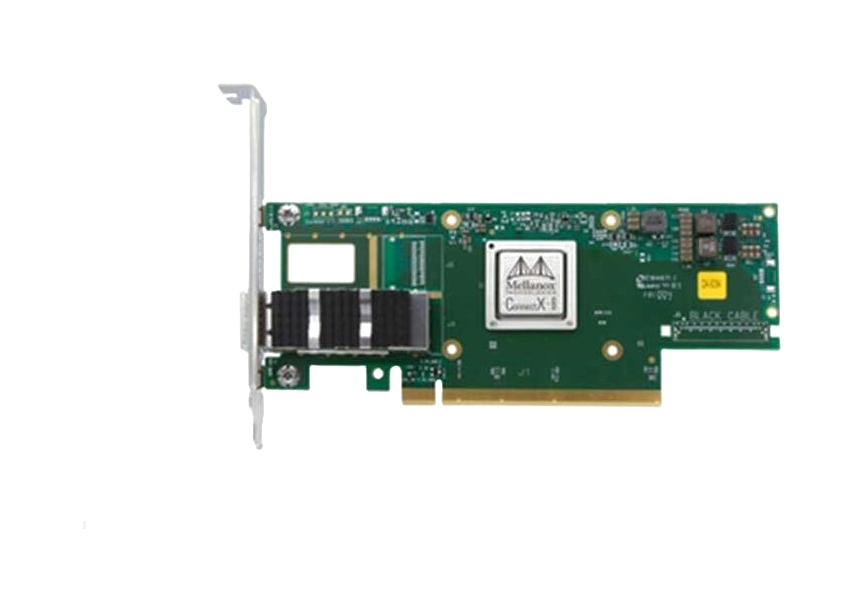

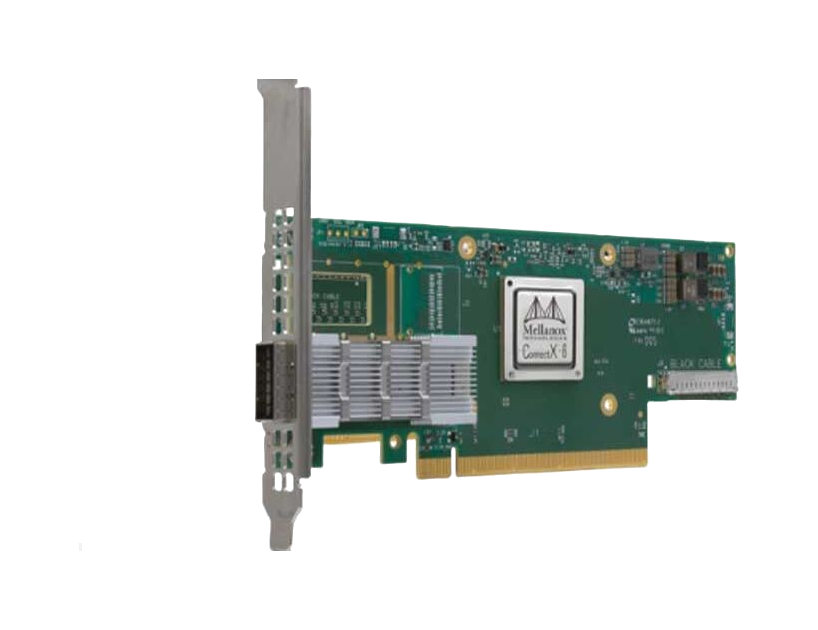

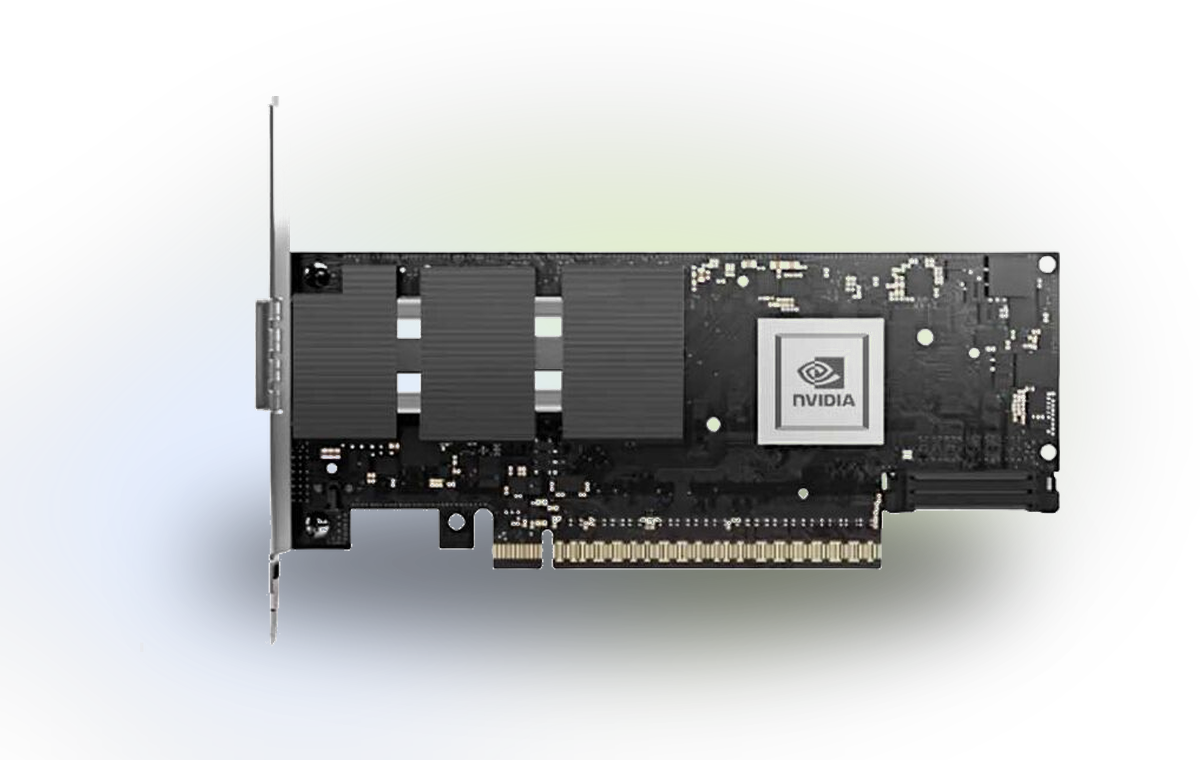

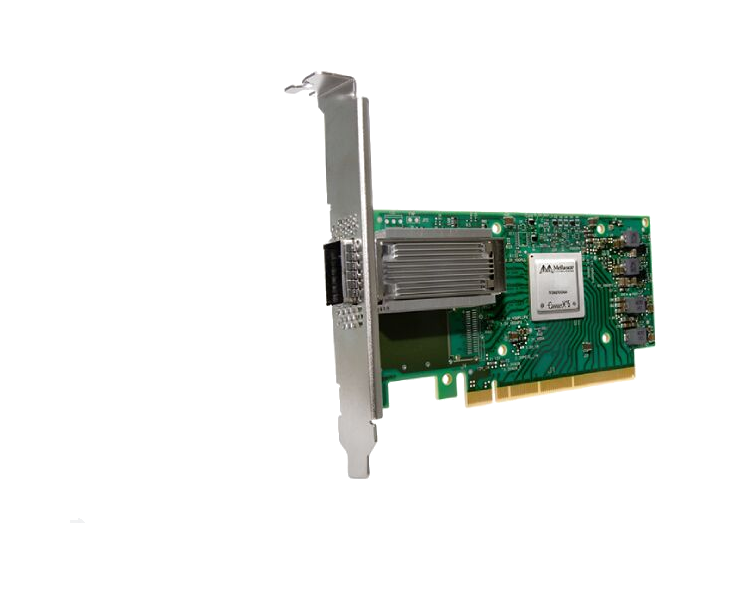

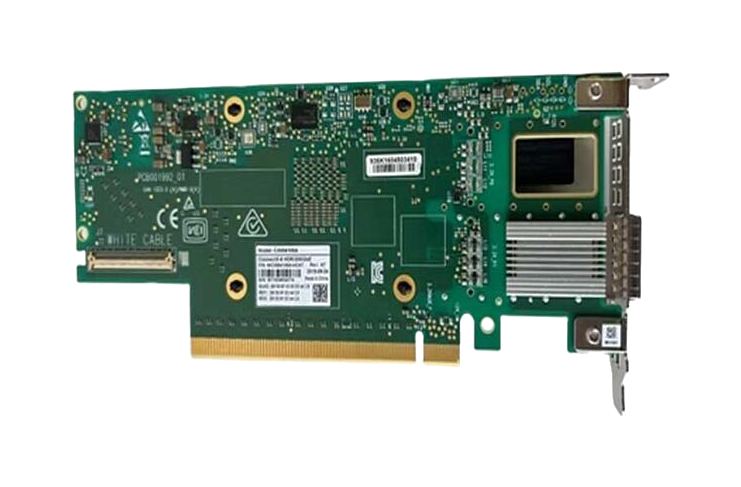

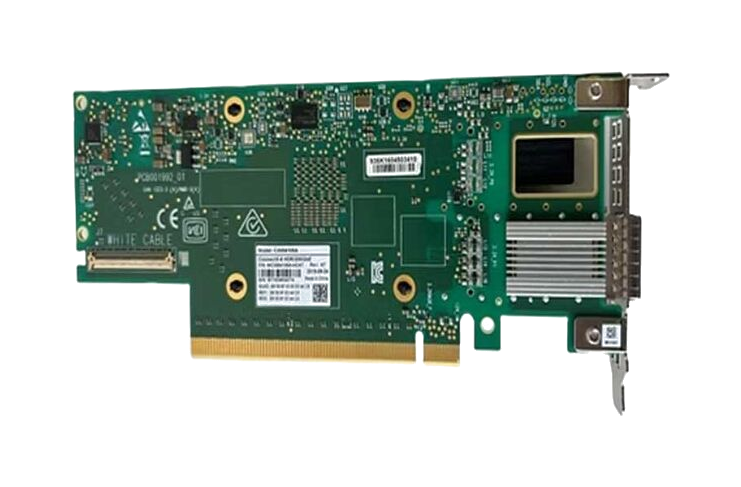

Product media

When product photography is available, it appears here for faster review. When imagery is limited, a clean technical outline remains useful for early evaluation.

Overview

The NVIDIA ConnectX-7 family delivers up to 400Gb/s of bandwidth per port and supports both InfiniBand (NDR/HDR/EDR) and Ethernet (up to 400GbE). Model MCX755106AS-HEAT features a PCIe Gen5 host interface (up to 32 lanes), dual-port 400Gb/s density, Multi-Host capability, and hardware engines for GPUDirect RDMA, NVMe-oF acceleration, and inline cryptography. It is built for demanding AI training, simulation, and real-time analytics, and it reduces CPU overhead while maximizing data throughput and security. On-board memory for rendezvous offload, SHARP collective acceleration, and ASAP2 SDN offloads let ConnectX-7 turn standard servers into high-performance network nodes with low jitter and nanosecond-precision timing (IEEE 1588v2 Class C).

Buying flow

This section gives buyers a practical handoff from technical review into quotation or compatibility follow-up.

Start from MCX755106AS-HEAT or the product name to reduce ambiguity before quotation starts.

Share quantity, destination or BOM context so the commercial follow-up is aligned with the project scope.

Use the page as the handoff point for stock discussion, compatibility checks and delivery planning.

Trust signals

The page is designed to reduce the distance between specification review and a real commercial inquiry.

Review

The NVIDIA ConnectX-7 MCX755106AS-HEAT NDR 400Gb/s InfiniBand Smart Adapter page supports compatibility questions and project-fit review before commercial action is finalized.

Project

This model can be evaluated against use cases such as AI & machine learning clusters (large-scale training with NCCL, UCX, and GPUDirect RDMA), together with quantity and rollout timing.

Language

The bilingual route supports regional buying teams that need technical review and RFQ handling in both English and Arabic.

FAQ

Short answers that give buyers clearer expectations before they move into the quote form.

Yes. This page is designed to move MCX755106AS-HEAT review directly into quotation, availability or BOM discussion.

Yes. The page can be used to frame compatibility questions around AI & machine learning clusters (large-scale training with NCCL, UCX, and GPUDirect RDMA) before commercial follow-up.

Yes. Related products within the same category give buyers an internal comparison path before they submit an inquiry.

Yes. The inquiry path is designed to accept part numbers, quantity, BOM context and availability requirements in one submission.

Related products

Internal links that support model comparison and adjacent product discovery.

Dual-port 100GbE PCIe adapter with RoCE, 750ns latency, 200Mpps throughput. Ideal for AI, cloud, and storage with NVMe-oF, SR-IOV, and ASAP2 offloads.

Dual-port 10GbE SFP28 adapter with RoCE, SR-IOV virtualization, and VXLAN offloads. Ideal for cloud, storage, and database servers requiring low latency.

High-performance OCP 3.0 SmartNIC with 25/50GbE ports, PCIe Gen4, IPsec encryption, and SDN acceleration for cloud data centers.

Dual-port 25/50GbE SmartNIC with PCIe Gen4, IPsec encryption, and Zero-Touch RoCE. Ideal for cloud, enterprise, and NFV workloads with 75Mpps throughput.

Dual-port 200Gb/s InfiniBand smart adapter with PCIe 4.0 support, hardware encryption, and in-network computing for HPC and AI workloads.

High-performance 400Gb/s dual-port adapter with PCIe 5.0 x16, hardware security offloads, and NVMe-oF support for AI/HPC data centers.

800Gb/s AI networking adapter with PCIe Gen6, InfiniBand/Ethernet support, and GPUDirect RDMA for hyperscale AI data centers.

800Gb/s dual-port AI networking adapter with PCIe Gen6, InfiniBand/Ethernet support, and GPUDirect RDMA for hyperscale GPU clusters.

High-performance 100Gb/s InfiniBand adapter with PCIe 3.0 x16, QSFP28 port, RDMA, and NVMe-oF offloads for HPC and AI workloads.

High-performance dual-port 100Gb/s InfiniBand & Ethernet NIC with RDMA offloads, ideal for HPC, AI, and cloud data centers. PCIe 4.0 ready.

NVIDIA MCX653106A-HDAT ConnectX-6 dual-port 200Gb/s InfiniBand/Ethernet smart adapter.
