Catalog

01 Structured family navigation

The catalog is organized for fast movement from family overview into specific model review rather than broad generic browsing.

Products

Use the catalog to move quickly from family exploration into model review and quotation action.

Categories

Families are organized around high-intent buying and specification review workflows.

11 quote-ready models

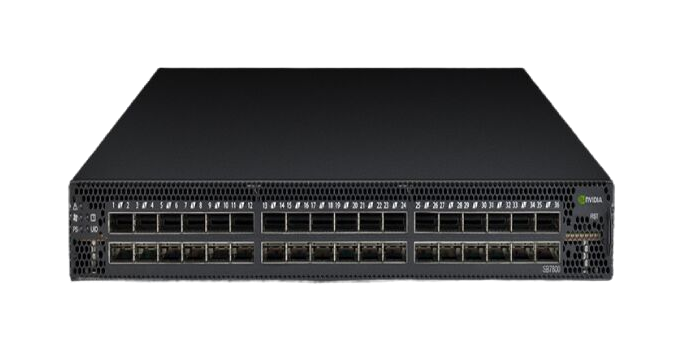

Category landing page for AI fabric switches, spine-leaf architecture planning and high-speed interconnect sourcing.

AI cluster, HPC and high-throughput fabric planning for exact-model RFQs.

Procurement entry

Commercial category page for NVIDIA InfiniBand switches used in AI clusters and high-performance computing fabrics.

9 quote-ready models

Built for data center Ethernet projects that require scalable throughput, reliable switching and compatibility review.

Leaf-spine, top-of-rack and cloud Ethernet refresh shortlisting.

Procurement entry

Explore NVIDIA Ethernet switching options for data center refresh, east-west traffic and high-throughput enterprise networks.

13 quote-ready models

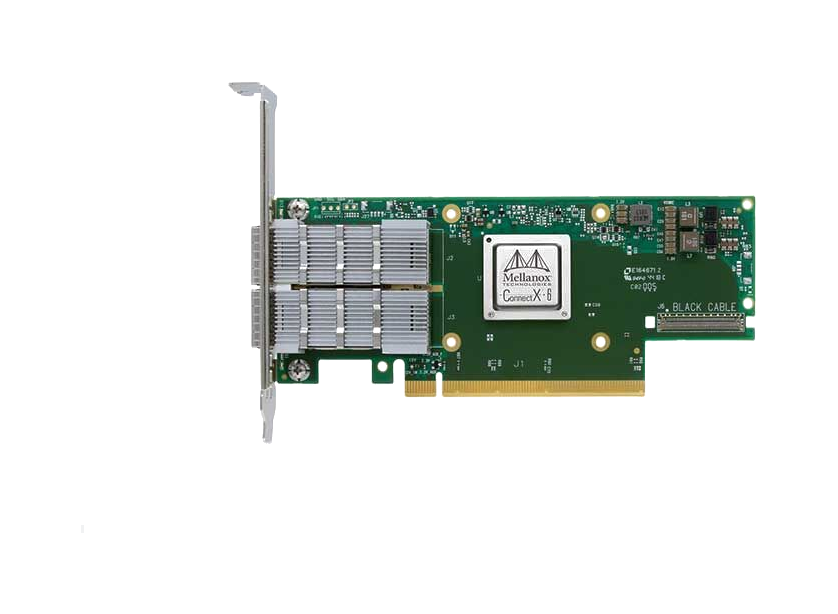

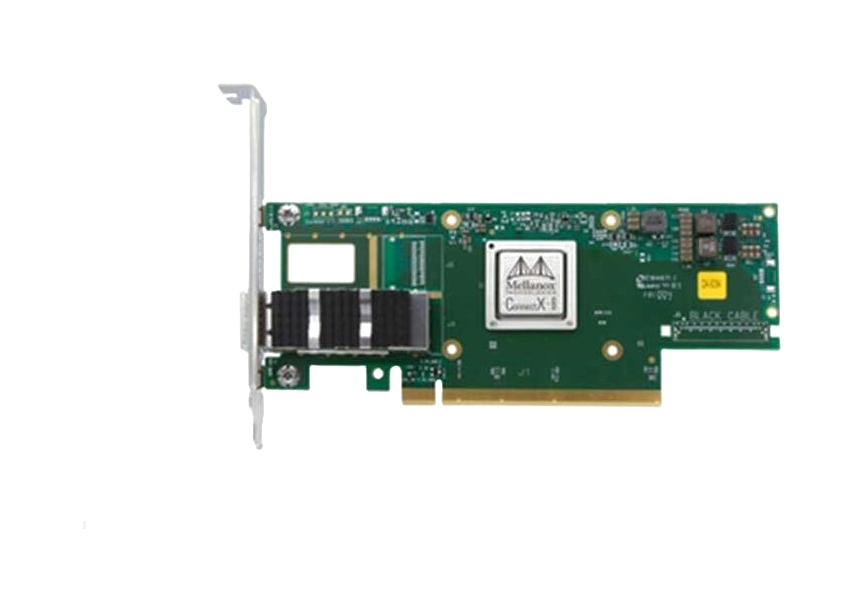

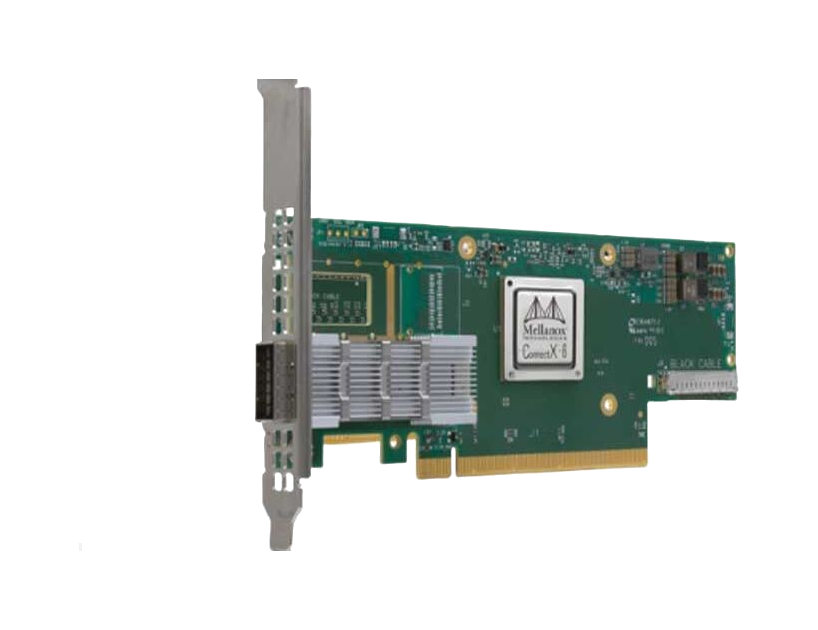

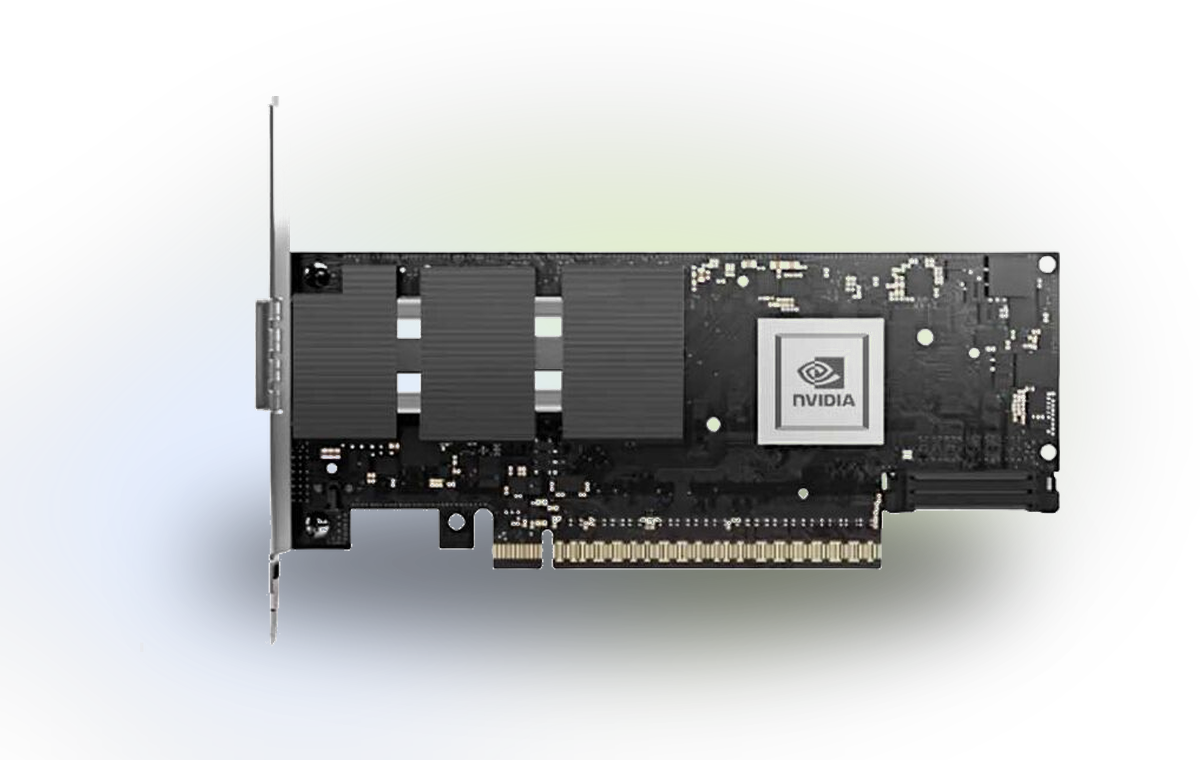

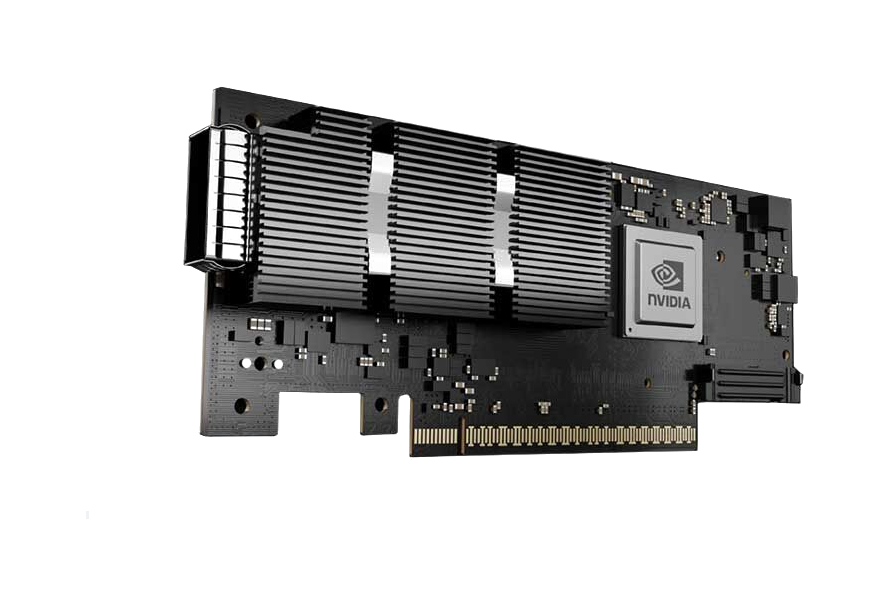

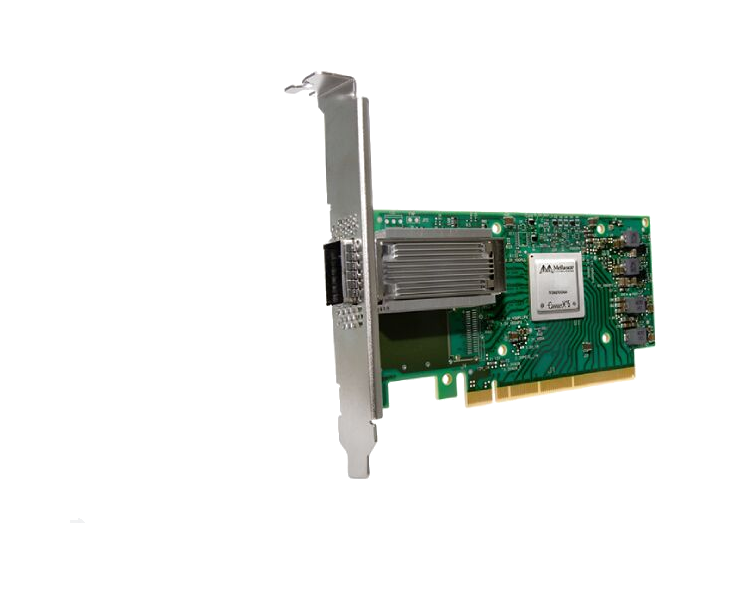

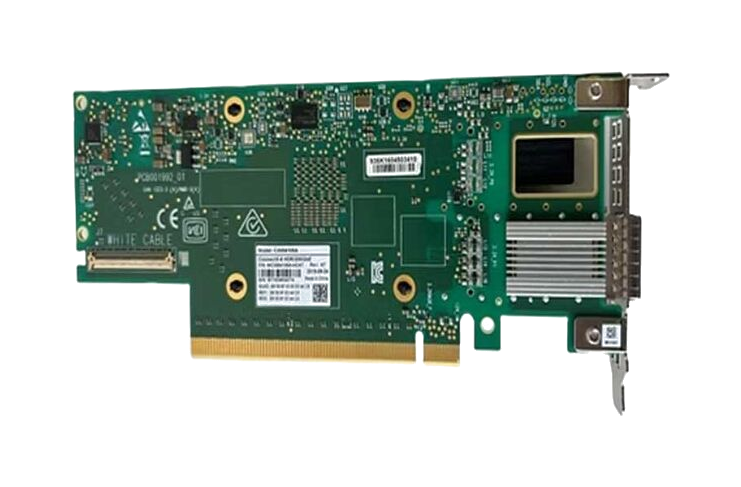

Server adapter category supporting AI nodes, storage fabrics and compatibility-driven procurement workflows.

Host-side networking, SmartNIC selection and accelerator connectivity review.

Procurement entry

Category page for NVIDIA adapters supporting server connectivity, RDMA workloads and high-bandwidth application delivery.

8 quote-ready models

High-speed optical modules for 25G / 100G / 400G / 800G InfiniBand or Ethernet interconnect projects.

Transceiver matching, reach review and interface compatibility planning.

Procurement entry

Suitable for switch-to-adapter and rack-to-rack optical planning with NVIDIA-compatible module options.

10 quote-ready models

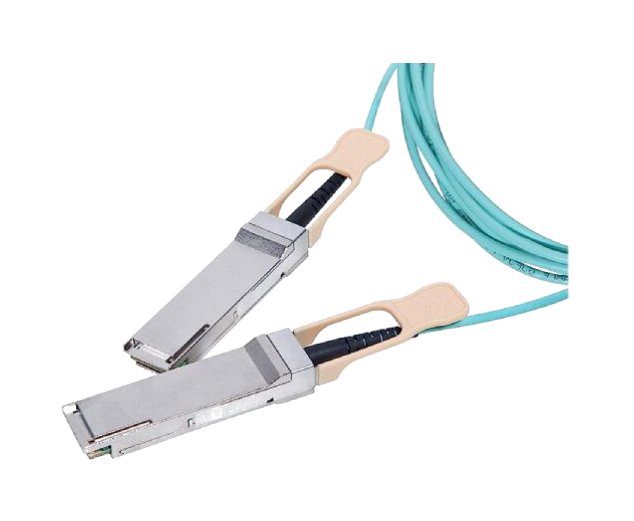

NVIDIA DAC, AOC and structured interconnect products for short-reach and high-speed data center cabling.

DAC, AOC and splitter cable selection for rack and cluster connectivity.

Procurement entry

Useful for validated breakout, passive copper and active optical link planning in GPU clusters.

Catalog trust

This section shows that the catalog is more than a simple list of products; it is a structured entry point for technical review and commercial inquiry.

Catalog

01 The catalog is organized for fast movement from family overview into specific model review rather than broad generic browsing.

Models

02 Each model card and detail page is designed to bridge technical review into quotation, availability and compatibility requests.

Language

03 English and Arabic pages share one clear structure so international teams can review products consistently.

Lead flow

04 Catalog browsing, product clicks and quote actions all move buyers toward a clear inquiry path.

Models

Each product entry leads into a focused detail page with specifications, use cases and clear inquiry actions.

Dual-port 100GbE PCIe adapter with RoCE, 750ns latency, 200Mpps throughput. Ideal for AI, cloud, and storage with NVMe-oF, SR-IOV, and ASAP2 offloads.

Dual-port 10GbE SFP28 adapter with RoCE, SR-IOV virtualization, and VXLAN offloads. Ideal for cloud, storage, and database servers requiring low latency.

High-performance OCP 3.0 SmartNIC with 25/50GbE ports, PCIe Gen4, IPsec encryption, and SDN acceleration for cloud data centers.

Dual-port 25/50GbE SmartNIC with PCIe Gen4, IPsec encryption, and Zero-Touch RoCE. Ideal for cloud, enterprise, and NFV workloads with 75Mpps throughput.

Dual-port 200Gb/s InfiniBand smart adapter with PCIe 4.0 support, hardware encryption, and in-network computing for HPC and AI workloads.

High-performance 400Gb/s dual-port adapter with PCIe 5.0 x16, hardware security offloads, and NVMe-oF support for AI/HPC data centers.

Dual-port 400Gb/s InfiniBand & RoCE smart adapter with PCIe Gen5 x16, GPUDirect RDMA, and hardware security offloads for AI, HPC, and cloud data centers.

800Gb/s AI networking adapter with PCIe Gen6, InfiniBand/Ethernet support, and GPUDirect RDMA for hyperscale AI data centers.

800Gb/s dual-port AI networking adapter with PCIe Gen6, InfiniBand/Ethernet support, and GPUDirect RDMA for hyperscale GPU clusters.

High-performance 100Gb/s InfiniBand adapter with PCIe 3.0 x16, QSFP28 port, RDMA, and NVMe-oF offloads for HPC and AI workloads.

High-performance dual-port 100Gb/s InfiniBand & Ethernet NIC with RDMA offloads, ideal for HPC, AI, and cloud data centers. PCIe 4.0 ready.

NVIDIA MCX653106A-HDAT ConnectX-6 dual-port 200Gb/s InfiniBand/Ethernet smart adapter.

High-performance 100G InfiniBand switch with 36 QSFP28 ports, 7.2Tb/s throughput, SHARP acceleration, and P2C airflow for HPC/AI data centers.

High-performance 36-port 100G InfiniBand switch with 7.2Tb/s throughput, ultra-low latency, and SHARP acceleration. UFM-ready for HPC/AI data centers.

High-performance 200G InfiniBand switch with 40 QSFP56 ports, 16Tb/s throughput, and SHARP acceleration for AI/HPC clusters. Low latency, managed, RoHS compliant.

High-performance 200G InfiniBand switch with 40 QSFP56 ports, 16Tb/s throughput, and SHARP in-network computing for AI/HPC clusters.

High-performance 40-port 200G InfiniBand switch with 16Tb/s throughput, SHARP acceleration, dual PSU, and sub-130ns latency. UFM-ready for AI clusters and HPC.

High-performance 200G InfiniBand switch with 40 QSFP56 ports and 16Tb/s throughput. UFM-ready for AI clusters & HPC data centers.

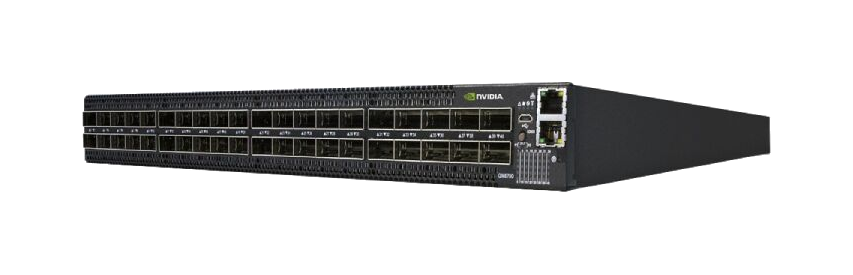

64-port 400Gb/s NDR InfiniBand switch with 51.2Tb/s throughput, SHARPv3 AI acceleration, and C2P airflow. Ideal for HPC and AI workloads.

High-performance 64-port 400G InfiniBand switch with 51.2 Tb/s throughput, SHARPv3 acceleration, and P2C airflow for AI/HPC clusters.

64-port 400G InfiniBand managed switch with 51.2 Tb/s throughput, SHARPv3 acceleration, and ultra-low latency for AI/HPC clusters.

High-performance 400Gb/s InfiniBand switch with 64 ports, 51.2Tb/s throughput, and SHARPv3 acceleration for AI/HPC data centers. 1U rack-mount design.

The MSN2100-CB2F is a data center switch from NVIDIA's Spectrum SN2000 Series. It provides 16×100GbE QSFP28 ports and…

NVIDIA Spectrum MSN2100-CB2RC 16-port 100GbE open networking switch with 1.6Tb/s throughput, Cumulus Linux, C2P airflow. Ideal for data center spine and leaf deployments.

Discover NVIDIA Spectrum SN2000 series open networking switches featuring 100GbE performance, low latency, and multiple NOS support for modern data centers.

The NVIDIA Spectrum SN3000 Series MSN3700-VS2F switch delivers 32 ports of 200GbE connectivity with 6.4Tb/s throughput for data center leaf/spine applications. Featuring advanced telemetry and low latency.

NVIDIA Spectrum SN2000 Series SN2201 open networking switch with 48x1GbE RJ45 & 4x100GbE QSFP28 ports. Features Cumulus Linux, low latency, hardware telemetry, and RoCE support for data centers.

The NVIDIA Spectrum SN2100 MSN2100-CB2FC 100GbE open networking switch delivers 1.6Tb/s throughput with 300ns latency. Compatible with various network cards and operating systems. Ideal for data centers and HPC…

High-performance 1U rack switch with 48x25GbE ports & 12x100GbE uplinks. Features 4.8Tb/s capacity, Cumulus Linux OS, and 425ns latency for data centers.

NVIDIA Spectrum SN2000 Series MSN2201-CB2RC open networking switch with 48x1GbE RJ45 & 4x100GbE QSFP28 ports. Features Cumulus Linux, 300ns latency, hardware telemetry, and RoCE support for enterprise data centers.

800G OSFP VR8 multimode optical transceiver with 50m reach on OM4 fiber. Features dual MPO-12 APC connectors, CMIS 5.0 compliance, and supports 800G Ethernet and InfiniBand NDR applications.

Mellanox MMA1B00-E100 40GbE QSFP+ SR4 optical transceiver supports high-speed data transmission up to 150m over OM4 fiber. Features DDM, low power, and hot-pluggable design. Ideal for data centers and enterprise…

Mellanox MFM1T02A-SR 10GbE SFP+ MMF optical transceiver. Supports 10Gb/s, 850nm, LC connector, up to 400m on OM4 fiber. Low power, DDM support, RoHS compliant.

NVIDIA MMA4Z00-NS 800Gb/s dual-port OSFP SR8 optical transceiver for high-density data centers. Supports 2x400G multimode, 50m reach, InfiniBand and Ethernet compatible with advanced thermal management.

NVIDIA MMS4X00-NS 800Gb/s twin-port OSFP DR8 single-mode optical transceiver. Features 2x400G ports, 100m reach, 100G-PAM4, and advanced thermal management for data centers.

The Mellanox MMA2P00-AS is a high-performance, pluggable SFP28 optical transceiver engineered for 25 Gigabit Ethernet networks. It represents a critical optical interconnect component that bridges the electrical…

400Gb/s OSFP SR4 transceiver for ConnectX-7, dual-protocol InfiniBand/Ethernet, 50m OM4 reach, MPO-12/APC connector, CMIS 4.0 compliant.

400Gb/s OSFP transceiver with DR4 single-mode optics, 100m reach, dual InfiniBand/400GbE support, and auto-speed reduction to 200G with splitter cables.

The NVIDIA MFA1A00-C015 is a QSFP28 VCSEL-based active optical cable (AOC) designed for 100Gb/s Ethernet systems. It integrates four multimode fiber transceivers per end, each operating up to 26Gb/s, delivering a total…

50m LSZH 100GbE AOC for data centers with programmable retiming, low power (2.2W), and SFF-8665 compliance. Ideal for Ethernet and InfiniBand EDR systems.

NVIDIA MFP7E20-N015 1:2 splitter cable converts 800G OSFP to dual 400G links. MPO-12/APC connectors, OM4 fiber, 15m length. Ideal for AI clusters & data centers.

High-performance 200G to 2x100G QSFP56 splitter AOC for data centers. Low latency, InfiniBand HDR/200GbE compatible, 10m length. 1-year warranty.

200Gb/s Mellanox MCP1600-E003E26 passive copper DAC cable for HDR InfiniBand. 3m length, low latency, <0.1W power, LSZH jacket. Ideal for HPC and data centers.

200Gb/s QSFP56 active optical cable for InfiniBand HDR & 200GbE. 5m MMF AOC with PAM4 modulation, 5W power, and VCSEL technology. Stock available.

200Gb/s QSFP56 active optical cable for InfiniBand HDR & 200GbE. 10m length, 5.0W power, VCSEL technology, and DDM support.

200Gb/s QSFP56 active optical cable for InfiniBand HDR & 200GbE. 20m reach, 5.0W power, PAM4 modulation. Ideal for HPC & data centers.

30m 200Gb/s QSFP56 active optical cable for InfiniBand HDR & 200GbE. Features PAM4 modulation, 5.0W power, VCSEL tech, and LSZH jacket.

Buy NVIDIA MFA1A00-E020 100Gb/s QSFP28 Active Optical Cable. 20m length, InfiniBand EDR & 100GbE compliant, low power, DDM support. In stock with full support.

AI fabric switching platform for high-performance compute clusters and structured InfiniBand sourcing projects.

Data center Ethernet switching option for east-west traffic, fabric refresh and enterprise workload expansion.

Server connectivity product page focused on adapter sourcing, host compatibility and AI node deployment planning.

FAQ

Short answers that help a buyer understand how to use the catalog before moving into the product page or inquiry form.

Start with the closest product family, then compare the launch models and move into the detail page of the exact part number you want quoted or reviewed.

Yes. Every product card includes a direct quote action so a buyer can jump from catalog browsing into inquiry without extra steps.

Yes. Each entry exposes a technical snapshot, related family context and a path into deeper product detail before submitting an inquiry.