RFQ

01 Part-number-led inquiry path

MSB7800-ES2F can be used as the direct starting point for quotation, BOM sharing and availability discussion.

High-performance 100G InfiniBand switch with 36 QSFP28 ports, 7.2Tb/s throughput, SHARP acceleration, and P2C airflow for HPC/AI data centers.

RFQ

Inquiry ready

Clear path for part number, quantity and BOM-led contact.

EN / AR

Bilingual buyer flow

Useful for procurement teams coordinating technical review across regions.

Datasheet available

The product datasheet can be downloaded directly from this page.

Selected specs

Deployment fit

Top‑of‑Rack (ToR) Leaf Connectivity – Ideal for connecting compute nodes in small to extremely large clusters, providing high‑density 100Gb/s access layer switching.

Follow-up path

Quote / Compatibility / Availability

Prepared for part-number-led and BOM-led requests

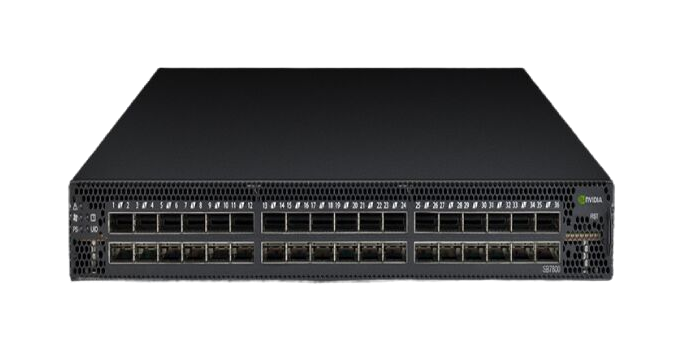

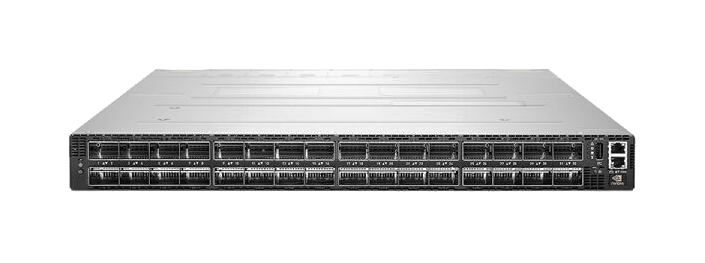

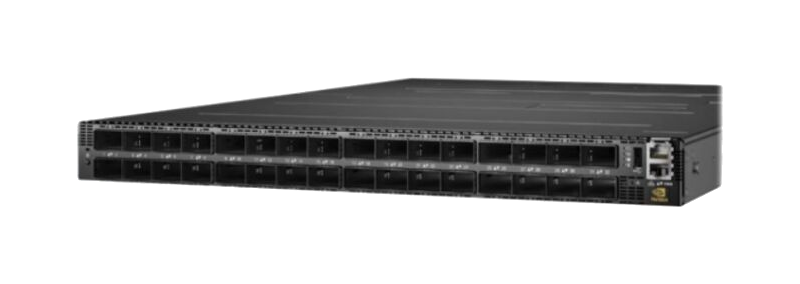

Product media

Product photography appears here when available for faster review. When imagery is limited, the technical outline remains useful for early evaluation.

Overview

The NVIDIA Mellanox MSB7800-ES2F is a 100Gb/s InfiniBand smart switch for HPC, AI, and cloud data centers. Part of the NVIDIA SB7800 series, it provides 36 QSFP28 ports at 100Gb/s per port, for an aggregate non-blocking throughput of 7.2 Tb/s (36 ports × 100Gb/s, counted in both directions) with low port-to-port latency. It is positioned as a top-of-rack leaf switch for clusters ranging from small to extremely large. The switch is fully managed: an onboard dual-core x86 CPU runs MLNX-OS and handles chassis management of firmware, power supplies, fans, and ports. With support for NVIDIA SHARP in-network computing, the MSB7800-ES2F offloads collective operations from host CPUs to the network fabric, which accelerates MPI and deep learning workloads. The MSB7800-ES2F variant uses P2C (power-to-connector) airflow: air enters at the power-supply side and exhausts at the port side, so it suits cooling layouts where cold air is supplied to the power-supply side of the chassis.
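The throughput figure quoted above can be checked with simple arithmetic. The sketch below assumes the aggregate number counts traffic in both directions on every port, which is the usual convention for switch datasheets; the function name and parameters are illustrative only and are not part of any NVIDIA tool.

```python
def aggregate_throughput_tbps(ports: int, gbps_per_port: int,
                              bidirectional: bool = True) -> float:
    """Return aggregate switch throughput in Tb/s.

    Assumption: a "non-blocking aggregate" figure counts both transmit and
    receive on every port, so the per-port rate is doubled.
    """
    directions = 2 if bidirectional else 1
    return ports * gbps_per_port * directions / 1000  # Gb/s -> Tb/s


# 36 QSFP28 ports at 100 Gb/s each, counted in both directions
print(aggregate_throughput_tbps(36, 100))  # 7.2
```

Under the same assumption, the figures listed for the related models are consistent: 40 ports at 200Gb/s gives 16 Tb/s, and 64 ports at 400Gb/s gives 51.2 Tb/s.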

Buying flow

This section gives buyers a practical handoff from technical review into quotation or compatibility follow-up.

Start from MSB7800-ES2F or the product name to reduce ambiguity before quotation starts.

Share quantity, destination or BOM context so the commercial follow-up is aligned with the project scope.

Use the page as the handoff point for stock discussion, compatibility checks and delivery planning.

Trust signals

The page is designed to reduce the distance between specification review and a real commercial inquiry.

RFQ

01 MSB7800-ES2F can be used as the direct starting point for quotation, BOM sharing and availability discussion.

Review

02 This page for the NVIDIA Mellanox MSB7800-ES2F (36-port 100G InfiniBand managed switch, 7.2Tb/s, P2C airflow) supports compatibility questions and project-fit review before commercial action is finalized.

Project

03 This model can be framed against use cases such as Top-of-Rack (ToR) leaf connectivity, where it connects compute nodes in small to extremely large clusters with high-density 100Gb/s access-layer switching, together with quantity and rollout timing.

Language

04 The bilingual route supports regional buying teams that need technical review and RFQ handling in two languages.

FAQ

Short answers that give buyers clearer expectations before they move into the quote form.

Yes. This page is designed to move MSB7800-ES2F review directly into quotation, availability or BOM discussion.

Yes. The page can be used to frame compatibility questions around Top-of-Rack (ToR) leaf connectivity, such as connecting compute nodes in small to extremely large clusters with high-density 100Gb/s access-layer switching, before commercial follow-up.

Yes. Related products within the same category give buyers an internal comparison path before they submit an inquiry.

Yes. The inquiry path is designed to accept part numbers, quantity, BOM context and availability requirements in one submission.

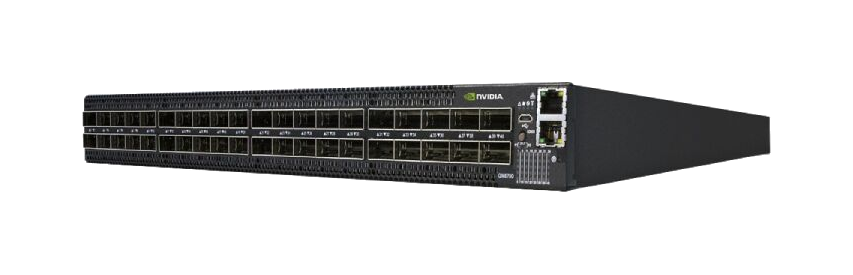

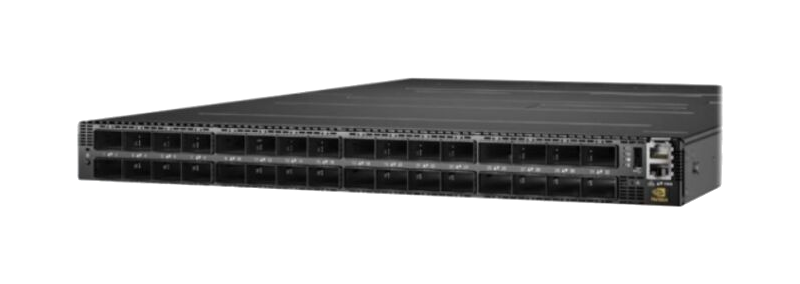

Related products

Internal links that support model comparison and adjacent product discovery.

High-performance 36-port 100G InfiniBand switch with 7.2Tb/s throughput, ultra-low latency, and UFM-ready for HPC/AI data centers. Features SHARP acceleration.

High-performance 200G InfiniBand switch with 40 QSFP56 ports, 16Tb/s throughput, and SHARP acceleration for AI/HPC clusters. Low latency, managed, RoHS compliant.

High-performance 200G InfiniBand switch with 40 QSFP56 ports, 16Tb/s throughput, and SHARP in-network computing for AI/HPC clusters.

High-performance 40-port 200G InfiniBand switch with 16Tb/s throughput, UFM ready for AI clusters and HPC. Features SHARP acceleration, dual PSU, and sub-130ns latency.

High-performance 200G InfiniBand switch with 40 QSFP56 ports, 16Tb/s throughput, and UFM-ready for AI clusters & HPC data centers.

64-port 400Gb/s NDR InfiniBand switch with 51.2Tb/s throughput, SHARPv3 AI acceleration, and C2P airflow. Ideal for HPC and AI workloads.

High-performance 64-port 400G InfiniBand switch with 51.2 Tb/s throughput, SHARPv3 acceleration, and P2C airflow for AI/HPC clusters.

64-port 400G InfiniBand managed switch with 51.2 Tb/s throughput, SHARPv3 acceleration, and ultra-low latency for AI/HPC clusters.

High-performance 400Gb/s InfiniBand switch with 64 ports, 51.2Tb/s throughput, and SHARPv3 acceleration for AI/HPC data centers. 1U rack-mount design.
